Artificial intelligence (AI) applications are becoming increasingly prevalent in social systems, such as the digital economy and urban mobility. These systems are now socio-technical systems that include both human and AI-powered decision-makers. This raises new questions about how AI-powered decision-makers will interact with each other and with humans in such emerging dynamical systems, and how we can apply control-theoretic methodologies to integrate them reliably into our society. To address these questions, at the GDN Lab:

- We focus on developing a foundational understanding of learning and autonomy in complex, dynamic, and multi-agent systems.

- We develop new methodologies for analyzing, controlling, and optimizing socio-technical systems.

- We apply these methodologies to specific problems in urban mobility, robotics, and the digital economy.

See the following posters and video recordings for examples of our recent research projects:

We are looking for new team members. Please get in touch with us if you are interested!

Recent News

- [Jun. 2025] Our paper “Logit-Q dynamics for efficient learning in stochastic teams” got accepted to the SIAM Journal on Control and Optimization.

- [May 2025] Our paper “Convergence of heterogeneous learning dynamics in zero-sum stochastic games” got accepted to the IEEE Transactions on Automatic Control.

- [May 2025] Our joint work “Dynamic feedback strategies for duopolies over partially observed consumer networks” with Dr. Saeed Ahmed from the University of Groningen got accepted to Dynamic Games and Applications.

- [Mar. 2025] Dr. Sayin gave an invited talk titled “Multi-team reinforcement learning” at the Algorithmic Learning in Games Seminar (ALIGS) Series.

- [Nov. 2024] Our paper “Balancing anarchy and efficiency: partial team formations and learning in potential games” got accepted to the Turkish Journal of Electrical Engineering & Computer Sciences.

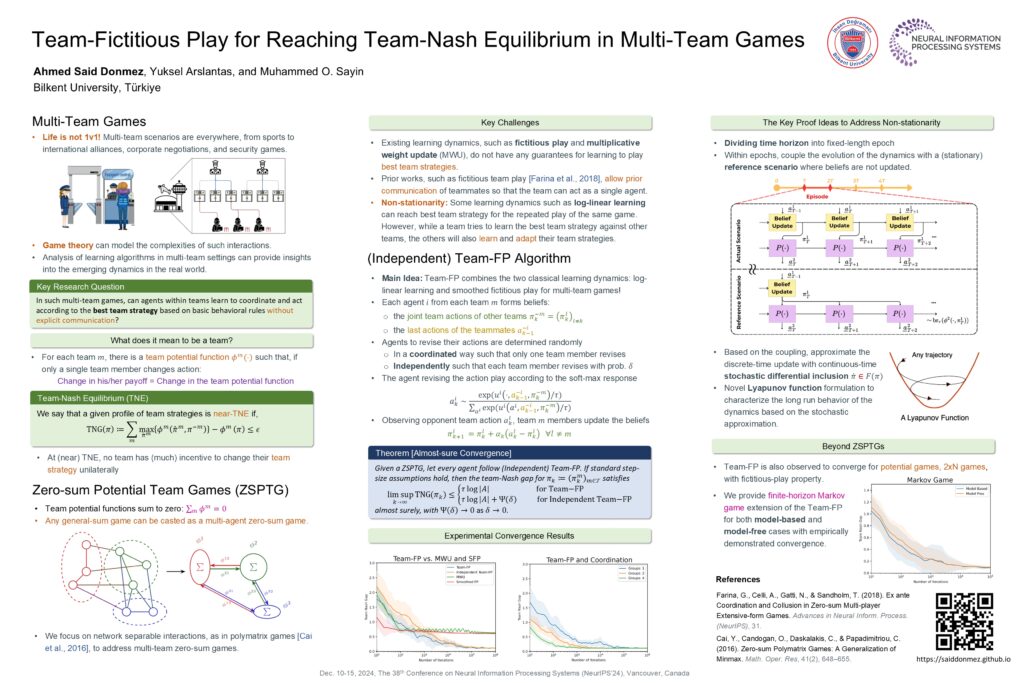

- [Sep. 2024] Our paper “Team-fictitious play for reaching team-Nash equilibrium in multi-team games” will appear in NeurIPS’24.

- [Sep. 2024] Our papers “Strategic control of intersections for efficient traffic routing without tolls” and “Strategic control of experience-weighted attraction model in human-AI interactions” will appear in IFAC CPHS’24.

- [Aug. 2024] Yuksel Arslantas successfully presented his M.S. thesis “Heterogeneity and strategic sophistication in multi-agent reinforcement learning”.

- [Jul. 2024] Our paper “Generalized individual Q-learning for polymatrix games with partial observations” will appear in IEEE CDC’24.